Additional Insights on Languages from our NDSS 2018 paper

Feb 20, 2018

This week, I presented our paper Didn’t You Hear Me? — Towards More Successful Web Vulnerability Notifications at NDSS in San Diego. Since there are some insights regarding the language of sites that we could not fit into the paper, I want to take this chance to point them out.

Brief Summary

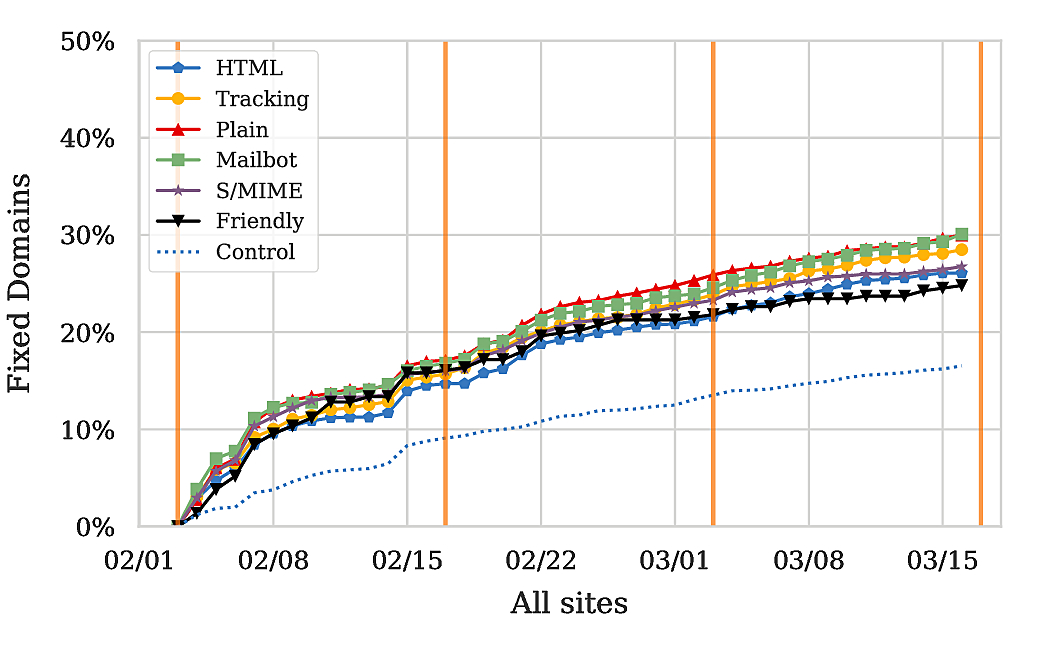

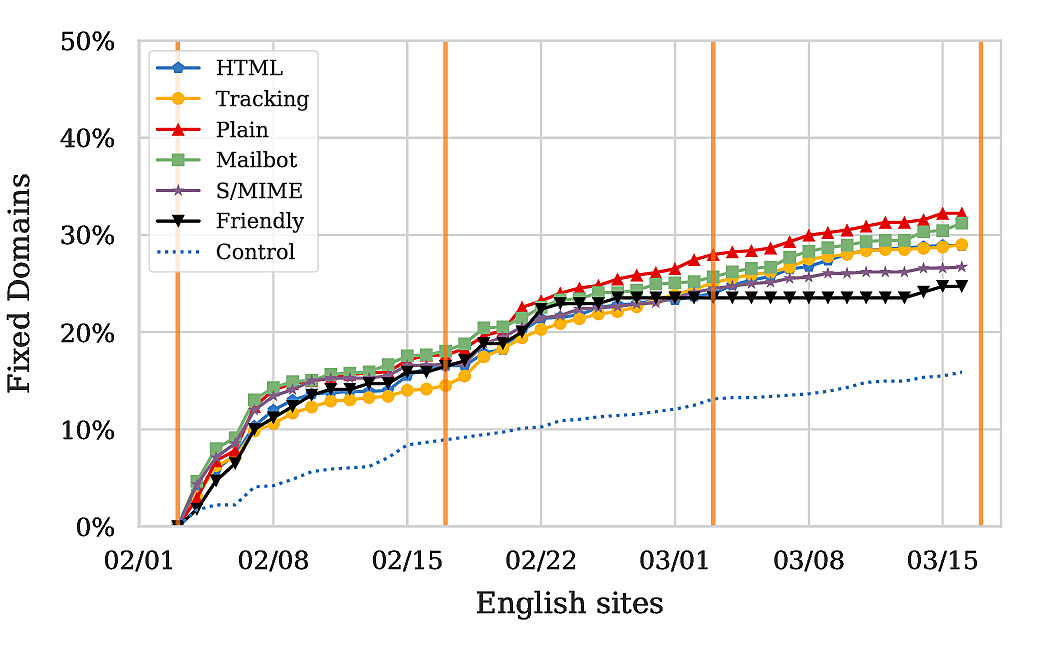

Assuming that the reader is not familiar with the paper, let me briefly summarize it. To understand how notifications can be improved, we experimented with different message formats (e.g., plain text vs. HTML, signed, …) in our notifications about discovered security issues. At a high level, our results indicated that while our emails reached the inbox of our “audience” in around 30-40% of cases, only between 16% and 25% of those recipients followed up on the provided information. Furthermore, only a fraction of those then remediated the problems.

Analyzing Language of Sites

Hence, the argument to be made is that some factors inhibit the success of our campaigns, specifically the trust that people put into the notification message. As a reviewer pointed out, this might in part be related to the nationality of the recipient, or at least to the language they speak. To understand the impact of that, I used Google Translate’s API to determine the language of each of the sites we had notified. Although this was done several months after our initial campaign and sites might have changed owners (and therefore languages) since then, the results highlight some interesting findings. We focus here only on Git, since it showed the more visible effect.
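To make the language-tagging step more concrete, here is a minimal sketch of how such a tally could be computed. It is not the script used for the analysis: the function names are hypothetical, and `detect` is a placeholder for whatever language detector is plugged in (for instance a thin wrapper around Google Translate's detection endpoint), assumed to map a text snippet to a language code.

```python
from collections import Counter
from html.parser import HTMLParser
from typing import Callable, Dict, List


class _TextExtractor(HTMLParser):
    """Collects visible text, skipping the contents of <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the human-readable text of an HTML document."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def tally_languages(pages: Dict[str, str], detect: Callable[[str], str]) -> Counter:
    """Count sites per detected language.

    `pages` maps a domain to its (already fetched) HTML.
    `detect` is an assumed, caller-supplied detector: text -> language code.
    Pages without any visible text are counted as "unknown".
    """
    counts: Counter = Counter()
    for _domain, html in pages.items():
        text = visible_text(html)
        counts[detect(text) if text else "unknown"] += 1
    return counts
```

Passing the detector in as a parameter keeps the sketch independent of any particular service; calling `tally_languages(pages, detect).most_common()` would then give the ranking of languages by number of sites.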

Findings

The largest fraction of all sites we considered is in English. Russian sites come second, with German sites following in fourth. I purposely skip Spanish, the third-largest group, since the effect I want to describe mostly applies to German sites.

Given that English sites are the majority (around 50% of all sites), it is no surprise that the graphs for All Sites and English Sites align, yielding a fix rate of about 15% for the control group and up to around 30% for notified groups. So, without being very accurate about this, I’d argue that our notifications increased the fraction of fixed sites by around 15 percentage points for English-language sites. Notably though, the numbers for Germany and Russia differ quite a bit from those for English sites.

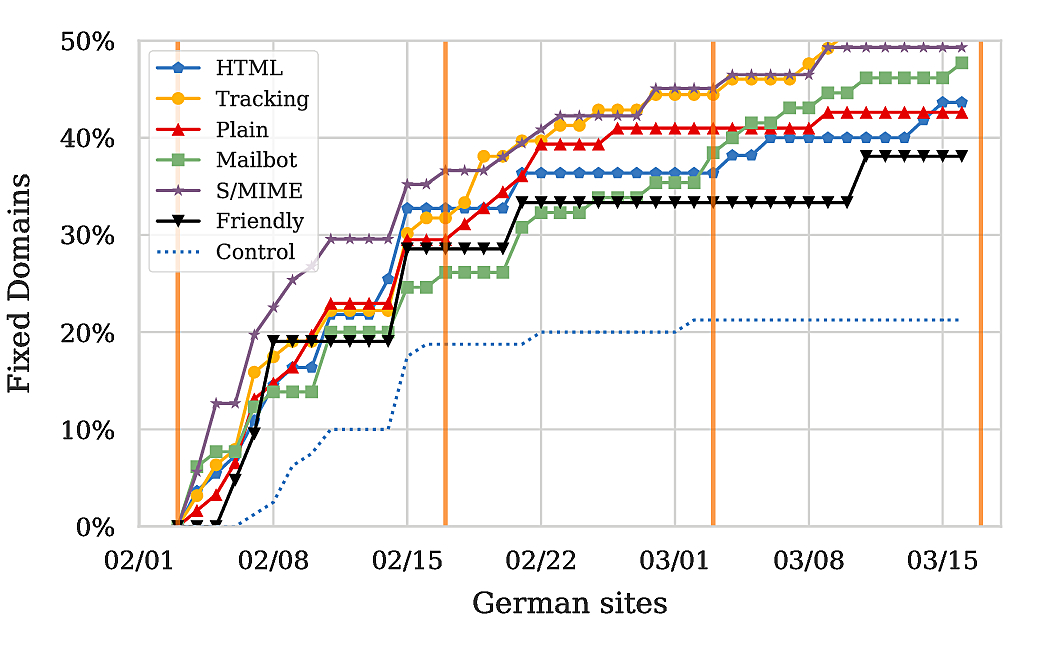

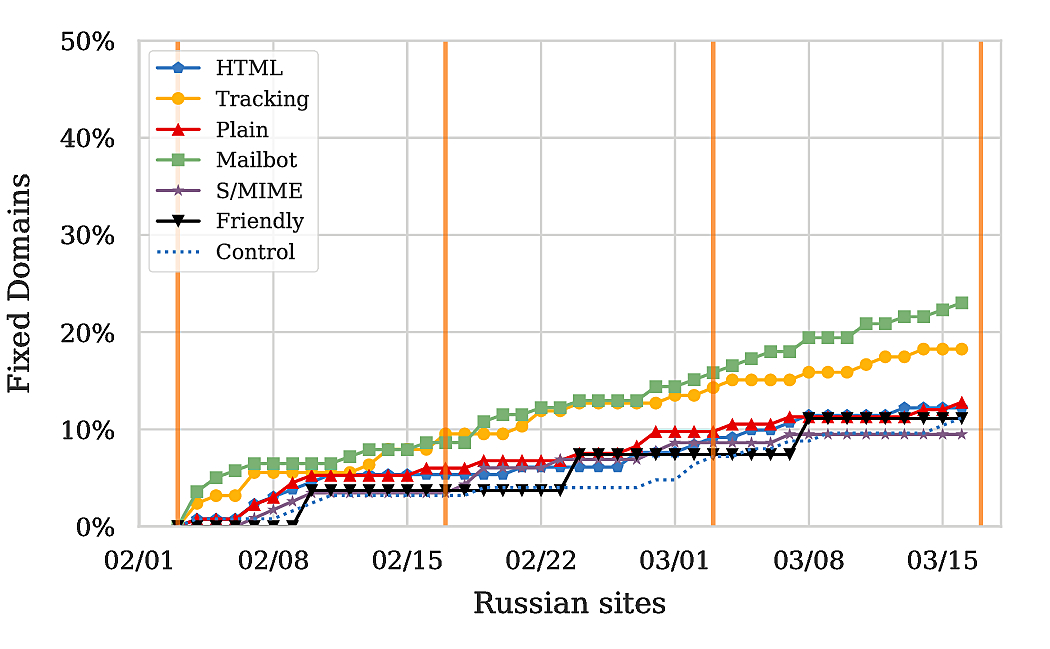

For Germany, we observe that 20% of the sites in the control group fixed the issues, whereas for Russian sites the number is only around 10%. Hence, it appears that the language of the domain is correlated with general security awareness, or at the very least with the frequency with which sites change (given that sites might just have been taken offline altogether). Moreover, if we consider the fraction of fixed domains in our notified set, we find that up to 50% of the German sites remediated, whereas only up to around 22% of the Russian sites fixed the issue. Looking at the increase in fixed domains, an increase of 15-30 percentage points in fix rate can be attributed to our notifications in Germany, and somewhere between 0 and 12 percentage points in Russia.
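The arithmetic behind these upper bounds can be spelled out in a few lines. The sketch below uses only the approximate figures quoted in this post (control fix rate and best notified-group fix rate, in percent), not the raw data, and the helper name is made up for illustration.

```python
def uplift_pp(control_pct: int, notified_pct: int) -> int:
    """Increase in fix rate attributable to notification, in percentage points."""
    return notified_pct - control_pct


# (control fix rate, best notified-group fix rate), rounded values from the text
rates = {
    "English": (15, 30),
    "German": (20, 50),
    "Russian": (10, 22),
}

# Absolute uplift per language, in percentage points
uplift = {lang: uplift_pp(c, n) for lang, (c, n) in rates.items()}

# Relative factor: how many times more sites were fixed than in the control group
factor = {lang: n / c for lang, (c, n) in rates.items()}

for lang in rates:
    print(f"{lang}: +{uplift[lang]} pp, {factor[lang]:.1f}x the control fix rate")
```

Note that these are the best-case values per language. The lower ends of the ranges given above (15 percentage points for German sites, 0 for Russian sites) come from the less successful notification groups, which are only visible in the graphs.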

Conclusions

The insights highlight two issues related to the notifications: language issues and trust in the provided information. As a research institution located in Germany, we are likely better known in Germany than in the US or Russia. Hence, the distinct difference for Germany is an indicator that this familiarity leads to higher reaction rates. In contrast, our impact on Russian sites likely suffers from issues related to the language of our notification and the lack of familiarity with CISPA as a research institution. Whatever the exact reasons may be, I believe this highlights some interesting problems going forward on how to improve the trust in our notifications.